As Revealed through Pueblo Indian Architecture, History, and Tree Ring Lab Time Series.

Robert W Endlich

INTRODUCTION

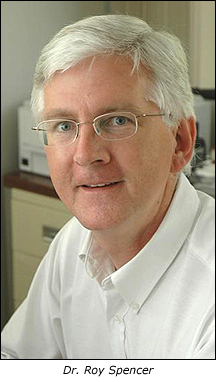

During the presentation at the 21 March 2026 CASF meeting, Bill Gutman highlighted brief segments from the Joe Rogan Experience video featuring comments by Dr. Will Happer, emeritus Professor of Physics at Princeton, and Dr. Richard Lindzen, emeritus Professor of Earth, Atmospheric, and Planetary Sciences at the Massachusetts Institute of Technology: https://open.spotify.com/episode/3ihn2aHDz9dyZU0T3Kylk3

The presentation featured several minute-long video segments. After each one, Bill Gutman paused the video and asked topical questions to prompt discussion of the content among meeting attendees.

This post pertains to a couple of these segments, specifically the generally …

Key Points:
